index●comunicación

Revista científica de comunicación aplicada

nº 16(1) 2026 | Pages 37-68

e-ISSN: 2174-1859 | ISSN: 2444-3239

Persuasive Communication with AI: Personalization, trust and ethical challenges

Comunicación persuasiva con IA: personalización, confianza y retos éticos

Received on 26/05/2025 | Accepted on 26/10/2025 | Published on 15/01/2026

https://doi.org/10.62008/ixc/16/01Comuni

Aitor Gil García | Universidad Camilo José Cela

aitor.gil@ucjc.edu | https://orcid.org/0009-0005-1133-7842

África Presol Herrero | Universidad Camilo José Cela

apresol@ucjc.edu | https://orcid.org/0000-0002-7600-8231

Resumen: Este estudio analiza el impacto de la inteligencia artificial (IA) en la comunicación persuasiva en marketing digital, con énfasis en los procesos de personalización, la confianza del consumidor y los retos éticos asociados. Se aplicó un diseño exploratorio secuencial con enfoque metodológico mixto, integrando entrevistas semiestructuradas a responsables de marketing de 50 empresas y encuestas estructuradas a 500 consumidores. Los resultados muestran mejoras significativas en conversión, engagement y satisfacción, pero también revelan preocupaciones crecientes sobre privacidad y manipulación algorítmica. Se concluye que la integración de IA en estrategias de marketing debe incorporar supervisión humana y principios éticos sólidos. Esta investigación constituye una contribución científica innovadora al proponer un modelo responsable que integra eficacia persuasiva y ética mediante una aplicación transparente y centrada en el usuario de la inteligencia artificial.

Palabras clave: inteligencia artificial; comunicación persuasiva; marketing digital; personalización; ética algorítmica; confianza del consumidor.

Abstract: This study examines the influence of artificial intelligence (AI) on persuasive communication in digital marketing, with a focus on personalization, consumer trust, and ethical implications. A sequential exploratory mixed-methods design was applied, combining semi-structured interviews with marketing professionals from 50 companies and structured surveys with 500 consumers. Results show significant improvements in conversions, engagement, and satisfaction, but also an increase in concerns about privacy and algorithmic manipulation. The study concludes that integrating AI into marketing strategies necessitates human oversight and adherence to strong ethical principles. This research constitutes an innovative scientific contribution by proposing a responsible model that integrates persuasive effectiveness and ethics through a transparent and user-centered application of artificial intelligence.

Keywords: Artificial Intelligence; Persuasive Communication; Digital Marketing; Personalization; Algorithmic Ethics; Consumer Trust.

CC BY-NC 4.0

To cite this work: Gil García, A. & Presol Herrero, Á. (2026). Persuasive Communication with AI: Personalization, trust and ethical challenges. index.comunicación, 16(1), 37-68. https://doi.org/10.62008/ixc/16/01Comuni

1. Introduction

Artificial intelligence (AI) has shifted from being an auxiliary tool to becoming an active communicator within the digital ecosystem. Its ability to segment audiences, personalize content, and automate messages is transforming communication processes, partially displacing human authorship. This shift raises ethical and cultural challenges, as it introduces messages generated by systems that lack consciousness yet exert influence on meaning construction and social interaction (Kaplan & Haenlein, 2019).

Startups and small and medium-sized enterprises (SMEs), which have traditionally been limited by their resources, have found in AI an opportunity to compete on more equal terms with large corporations (Lee et al., 2023). Tools such as content automation, recommendation engines, and conversational chatbots optimize processes, reduce costs, and enable the design of hyper-personalized experiences tailored to each user's emotions, habits, and everyday decision-making (Sundar, 2020).

However, the potential of AI is not without friction. What happens when a system persuades a user without their awareness? How does this affect trust in the brand, consumer autonomy, or informed consent? In this study, AI-driven persuasive communication (or algorithmic persuasion) is defined as the strategic use of intelligent algorithms to deliberately influence users’ decisions or behaviors through large-scale automated message personalization by integrating processes of segmentation, recommendation, and continuous adaptation (Zarouali et al., 2022). Numerous studies warn of the risks associated with algorithmic opacity, automated biases, and the unethical use of data in digital environments (Floridi et al., 2018; Mittelstadt et al., 2016; Raji et al., 2020). All of this places AI at the center of a crucial debate: the need to build persuasive communication that is ethical, transparent, and human-centered.

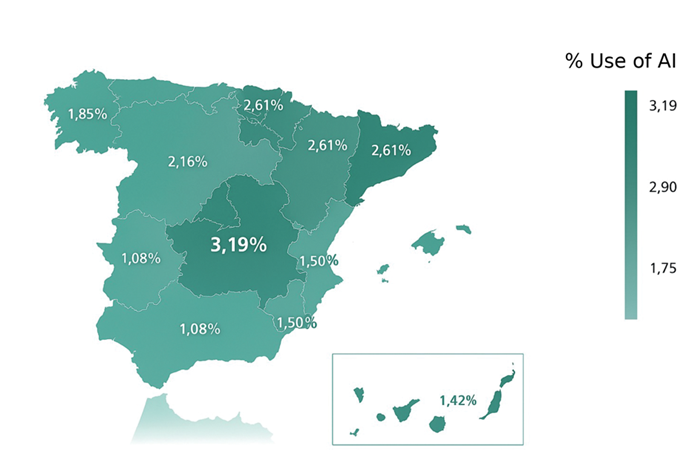

Figure 1. Percentage of artificial intelligence use among Spanish SMEs by autonomous community

Source: IndesIA (2024). Barometer of AI Adoption in Spanish SMEs. Original image extracted from the official report. Analysis based on secondary data (results from a national study).

Despite the growing academic and business interest in AI applied to marketing, a gap remains in the literature regarding how these technologies are being implemented within the Spanish business landscape. Furthermore, the communicative impact of such implementations is rarely examined from an approach that integrates operational, ethical, and educational dimensions. In this regard, the present study also contributes to the field of educommunication by analyzing how marketing professionals understand, adopt, and communicate about AI capabilities, and to what extent these practices align—or fail to align—with inclusive, critical, and conscious communication models (Nagamini & Aguaded, 2018).

In this context, the present study examines the impact of AI on the digital persuasive communication of Spanish startups and SMEs, assessing both its operational benefits and the ethical challenges it presents. To this end, a mixed-methods approach is adopted, specifically a sequential exploratory design (DEXPLOR-S), which begins with a qualitative phase to examine a complex phenomenon and subsequently validates and expands the findings through quantitative instruments (Creswell & Plano Clark, 2017; Hernández-Sampieri et al., 2014). Specifically, 50 semi-structured interviews were conducted with marketing and communication managers from real companies, complemented by structured surveys administered to 500 consumers who had interacted with AI-driven campaigns. The qualitative analysis was conducted using Atlas.ti, and the quantitative analysis was performed using Jamovi, allowing for a rigorous, transparent, and replicable treatment of the data. This methodological triangulation enables the comparison of corporate discourses and public perceptions, thereby providing an updated overview of the role of AI in persuasive communication.

The article is organized as follows: after this introduction, the theoretical framework is presented, including the main background, conceptual foundations, and research gaps. Next, the study's objectives and proposed hypotheses are outlined. Subsequently, the methodology employed is described and the results obtained are analyzed. Finally, the communicative, ethical, and strategic implications of AI use are discussed, and conclusions and proposals for responsible implementation in business environments are offered.

2. Theoretical Framework

The present theoretical framework is structured around four principal axes. First, it examines how AI has become a crucial component of strategic communication between brands and their audiences. Next, it reviews AI adoption in SMEs and startups, with an emphasis on common use cases and their contribution to process optimization and commercial strategy. Subsequently, it addresses algorithmic persuasion and its impact on public perception, as well as the ethics of digital communication, understanding AI not only as a resource but also as a new persuasive communicator in digital environments. Finally, it incorporates an educommunicative perspective, proposing a responsible conceptual model that balances persuasive effectiveness, ethics, and human values.

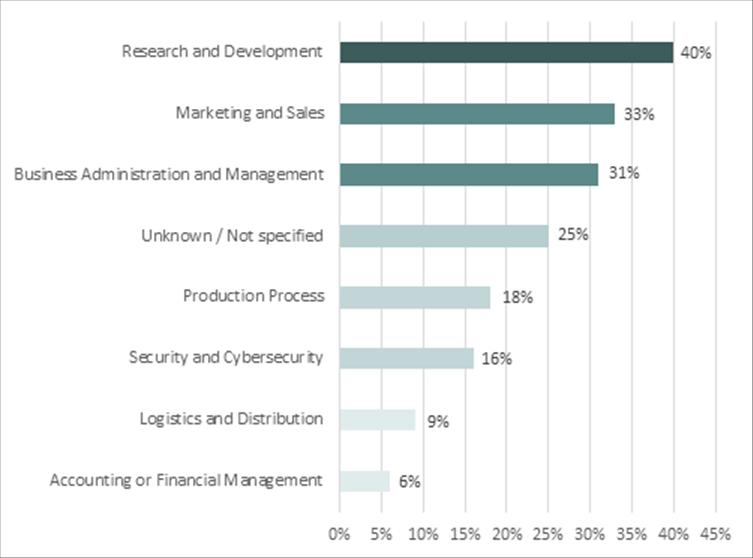

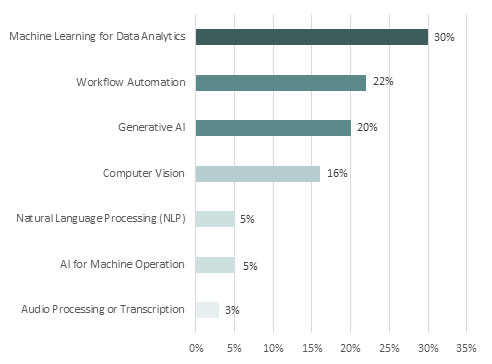

In this regard, it is pertinent to note how Spanish SMEs are utilizing artificial intelligence. Figure 2 illustrates the primary use cases, where marketing and sales occupy the second position (33%), behind research and development (40%). These data reinforce the importance of studying the communicative impact of AI in emerging business contexts. Furthermore, Figure 3 illustrates which technologies are being used most frequently. Machine learning applied to data analytics (30%), workflow automation (22%), and generative AI (20%) predominate, all of which have a strong communicative component, particularly in processes such as personalization, automated content creation, and real-time decision-making.

Figure 2. Percentage of Spanish SMEs that use artificial intelligence by functional area

Source: IndesIA (2024). Barometer of AI Adoption in Spanish SMEs. Secondary data analysis from a sectoral study.

Figure 3. Percentage of Spanish SMEs using different artificial intelligence technologies

Source: IndesIA (2024). Barometer of AI Adoption in Spanish SMEs. Secondary data analysis from a sectoral study.

Recent studies confirm the progressive adoption of AI in SMEs and startups. In Spain, the IndesIA Barometer (2024) indicates that more than half of SMEs already use AI in marketing and digital communication. However, this development is not perceived uniformly. Torres-Toukoumidis et al. (2024) note that the social perception of AI varies according to technological familiarity, educational level, and the context of use. Therefore, it is essential to understand how different groups interpret algorithmic personalization: whether as an enhancement of the user experience or as a threat to privacy. This communicative dimension necessitates the development of strategies that integrate ethics, transparency, and user control, with the goal of fostering digital relationships founded on trust (Eubanks, 2018).

2.1. Artificial Intelligence and Strategic Communication

AI has ceased to be merely a support tool in the field of marketing and has become a strategic axis that is redefining communication between brands and audiences (Grewal & Roggeveen, 2017). Through advanced algorithms, companies personalize messages, anticipate user behaviors, and optimize interactions in real-time, transforming persuasion into an automated, dynamic, and highly adaptable process (Russell & Norvig, 2020). This shift results in substantial improvements in key metrics, including engagement, conversion, and customer loyalty (Kumar et al., 2021). While this evolution enhances the effectiveness of digital campaigns, it also raises challenges related to transparency, privacy, and user autonomy (Gunning & Aha, 2019). In this new environment, AI acts as an invisible interlocutor that learns, adapts, and evolves with each interaction. Persuasion no longer depends exclusively on human intent; instead, it becomes distributed between humans and algorithms capable of interpreting data, predicting reactions, and generating personalized communicative responses (Longoni et al., 2019).

2.2. Applications of AI in Startups and SMEs

For startups and SMEs, AI has become a key tool for improving competitiveness. These organizations, previously limited by their resources, now have access to technologies that were once only available to large corporations (Tadimarri et al., 2024). Currently, they can analyze data in real-time, automate strategic decision-making, and develop communicative strategies with a level of precision previously unattainable, without the need for significant upfront investments (Suraña-Sánchez & Aramendia-Muneta, 2024).

In Spain, significant improvements are being observed: for example, 61% of SMEs report increased customer conversion and retention after implementing AI solutions (IndesIA, 2024). However, this expansion raises new ethical and regulatory dilemmas. Regulation (EU) 2024/1689 —commonly known as the European AI Act— seeks to address these tensions by establishing a common framework for transparency and the protection of rights (European Union, 2024). In this regard, recent studies emphasize the need to balance innovation and responsibility: Ngo (2025) highlights that user trust depends on the transparency and comprehensibility of automated processes, while Sebastião and Dias (2025) advocate for the integration of robust regulatory frameworks to ensure the ethical application of AI in digital communication.

2.3. Algorithmic Persuasion, Public Perception, and Ethics

AI has become an active persuasive agent within strategic communication (Davenport et al., 2020). Its ability to segment audiences, automate campaigns, and adapt messages in real time has transformed the way organizations interact with their publics. Platforms such as Amazon, Netflix, and Spotify apply algorithmic hyper-personalization to anticipate consumption decisions and generate tailored experiences, consolidating AI as an «invisible interlocutor» that learns and evolves with each interaction (Lee et al., 2023; Sundar, 2020).

While these innovations enhance key indicators such as conversion and engagement (Kumar et al., 2021), they also generate ethical and perceptual tensions. Persuasive influence is now distributed between humans and algorithms (Longoni et al., 2019): while some consumers value algorithmic personalization positively, others perceive it as a threat to their privacy or autonomy (Afroogh et al., 2024; Torres-Toukoumidis et al., 2024). This complements Eubanks’ (2018) perspective, which emphasizes that digital trust depends not only on technical transparency but also on the degree of effective control that users feel they have over the system.

Recent research suggests that algorithmic transparency is crucial in establishing trust. For example, Laux et al. (2024) show that transparency mitigates the negative relationship between general attitudes toward AI and trust in organizations that use it. In the European Union, the AI Regulation (2024) promotes common principles of safety and explainability; however, authors such as Ngo (2025) and Eubanks (2018) argue that, beyond regulation, an ethical approach must also be cultural and communicative, centered on the user experience.

In summary, the deployment of AI in communication raises significant ethical tensions. Authors such as Fernández-Fernández and Pinillos (2011) warn that greater personalization increases the potential for manipulation, while Mittelstadt et al. (2016) show how algorithmic biases can reinforce social inequalities. Kaplan and Haenlein (2019) emphasize the need to design human-centered systems that are subject to accountability. These approaches suggest that the ethics of AI cannot be limited to the technical domain alone: it requires integrative frameworks that consider communicative, educational, and social dimensions to ensure that technologies are auditable and aligned with human values.

2.4. Educommunication and Integrative Theoretical Framework

The legitimacy of AI-mediated persuasive communication depends on ensuring conditions such as informed consent, the comprehensibility of the algorithmic process, and the availability of non-automated alternatives (Jauregui & Ortega, 2020). Beyond responding to legal requirements, these guarantees form part of a broader commitment to educommunication, understood as the promotion of critical digital literacy and citizen empowerment to sustain an ethical and conscious relationship with algorithms (Nagamini & Aguaded, 2018; Zozaya Durazo et al., 2022).

The integration of communicative, ethical, and educommunicative frameworks is essential for addressing algorithmic persuasion in a comprehensive manner. Persuasive communication contributes strategies of influence and effectiveness; AI ethics contributes the principles of transparency, fairness, and accountability; and educommunication contributes critical literacy and citizen empowerment in relation to technology. As summarized by Torres-Toukoumidis et al. (2024), the articulation of these three approaches not only links innovation and values, but also redefines the role of the user as an active agent in algorithmic governance, offering a model for AI implementation that considers both business objectives and audience rights.

3. Objectives of the Study

3.1. General Objective

The primary objective of this study is to examine the impact of artificial intelligence on digital persuasive communication, evaluating its ability to personalize messages, enhance the effectiveness of marketing strategies, and foster consumer trust. Additionally, it seeks to identify the principal ethical risks associated with its implementation, with particular attention to privacy, algorithmic manipulation, and communicative fairness.

3.2. Specific Objectives

Based on this general objective, the following specific objectives are proposed:

SO1. Analyze how startups and SMEs integrate AI into their persuasive communication and digital marketing strategies.

SO2. Evaluate the impact of AI on key metrics such as conversion, engagement, and customer satisfaction.

SO3. Explore consumer perceptions of personalized messages generated by AI systems.

SO4. Identify the main ethical risks associated with the use of AI in communication, including privacy, bias, and user autonomy.

SO5. Propose a responsible model for AI implementation that combines communicative effectiveness, human supervision, and ethical principles.

3.3. Research Hypotheses

Based on the theoretical review and the objectives of the study, the following research hypotheses are formulated:

H1. The implementation of artificial intelligence systems in digital marketing significantly improves conversion rates in startups and SMEs.

H2. The use of AI for message personalization increases customer engagement and satisfaction.

H3. A positive consumer perception of AI is associated with high levels of data transparency and ethical communication practices by brands.

H4. The absence of human supervision in AI-based systems generates consumer distrust and reduces the communicative effectiveness of these systems.

H5. The adoption of a hybrid model that combines artificial intelligence with human oversight strengthens consumer trust and improves the quality of the brand–audience relationship.

4. Methodology

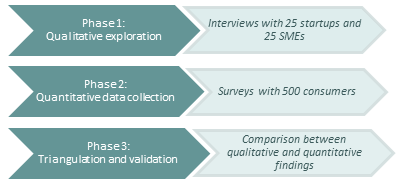

This study adopts a mixed-methods approach, specifically a sequential exploratory design (DEXPLOR-S), with the aim of comprehensively analyzing the impact of AI on the persuasive communication strategies developed by startups and SMEs. This approach combines qualitative and quantitative methods, allowing for the evaluation of both objective indicators—such as conversion, engagement, and degree of personalization—and subjective perceptions, attitudes, and barriers to the adoption of AI-based systems. The fieldwork was conducted between July 2024 and January 2025. The methodological sequence begins with a qualitative phase aimed at exploring in depth the practices, strategies, and concerns of marketing and communication managers. Based on the emerging findings, a subsequent quantitative phase was designed to validate and expand them through a structured survey administered to consumers (Creswell & Plano Clark, 2017). This design enables effective data triangulation and strengthens the analytical validity of the study by complementarily integrating the perspectives of the business environment and the user experience (Torres-Toukoumidis et al., 2024).

4.1. Research Design and Methodological Approach

In the qualitative phase, interviews were conducted with 50 marketing and communication managers from 25 startups and 25 Spanish SMEs. The sample size was established to balance sectoral diversity and analytical depth, and the selection was carried out through purposive sampling, prioritizing organizations with documented experience in applying artificial intelligence to communication. The interviews were conducted between September and November 2024 (with an average duration of approximately 30 minutes), recorded, transcribed, and analyzed thematically (Yin, 2017; Bryman, 2016) using inductive coding with Atlas.ti. The instruments were previously validated by experts in digital communication and data ethics. Based on the inclusion criteria proposed by Yin (2017), the selected companies met the following requirements: operating in Spain; being officially classified as a startup or SME (European Commission, 2020); having used AI in the last two years in marketing or customer service; providing public evidence of such use (websites, press, or sectoral databases); and applying AI for communicative purposes (e.g., personalization, chatbots). Cases in which AI was applied solely to internal processes not related to external communication were excluded.
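The inclusion and exclusion criteria listed above can be read as a simple screening rule. The following is a minimal sketch of that rule, not the authors' actual procedure: the `Candidate` record, its field names, and the example companies are hypothetical and serve only to make the logic explicit.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """Hypothetical record for one company under screening."""
    name: str
    operates_in_spain: bool
    company_type: str               # "startup", "sme", or any other label
    years_since_last_ai_use: float  # AI use in marketing or customer service
    has_public_evidence: bool       # websites, press, or sectoral databases
    ai_use_is_communicative: bool   # False if AI is used for internal processes only


def meets_inclusion_criteria(c: Candidate) -> bool:
    """Return True only if every criterion described in the text is satisfied."""
    return (
        c.operates_in_spain
        and c.company_type in {"startup", "sme"}
        and c.years_since_last_ai_use <= 2
        and c.has_public_evidence
        and c.ai_use_is_communicative  # internal-only use is an exclusion
    )


# Invented examples, for illustration only.
candidates = [
    Candidate("Company A", True, "sme", 0.5, True, True),
    Candidate("Company B", True, "startup", 1.0, True, False),  # internal-only AI
    Candidate("Company C", False, "sme", 0.2, True, True),      # not in Spain
]
selected = [c.name for c in candidates if meets_inclusion_criteria(c)]
```

In this toy list only the first company passes, because the second applies AI solely to internal processes and the third does not operate in Spain.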

In the quantitative phase, a survey was administered to 500 digital consumers residing in Spain using quota sampling (age, gender, educational level, and digital experience). All participants had previously interacted with AI-assisted marketing campaigns. The questionnaire, validated by expert judges, measured variables such as trust in AI-based systems, perceived personalization, privacy evaluation, and communicative effectiveness. Statistical analysis was conducted using Jamovi 2.4, employing Pearson correlations, exploratory factor analysis, and multiple linear regression analyses. The instrument demonstrated high internal consistency (Cronbach’s α = 0.81). Descriptive analysis revealed that 52% of respondents were women and 48% were men; 63% were between 25 and 45 years old; 78% resided in urban areas; and 67% had completed university studies.
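For readers unfamiliar with the internal consistency coefficient reported above, the sketch below shows how Cronbach's α is commonly computed from item-level responses: α = k/(k − 1) · (1 − Σ item variances / variance of total scores). The study itself used Jamovi; this code is only an illustration, and the response matrix is invented toy data, not the survey data.

```python
from statistics import variance  # sample variance (n - 1 denominator)


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of k item scores."""
    k = len(responses[0])
    if k < 2 or len(responses) < 2:
        raise ValueError("need at least two items and two respondents")
    if any(len(row) != k for row in responses):
        raise ValueError("every respondent must answer the same number of items")
    items = list(zip(*responses))                       # one tuple per item
    item_variances = [variance(col) for col in items]
    total_variance = variance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


# Toy data (assumption): 5 respondents answering 4 Likert items scored 1 to 5.
toy_responses = [
    [4, 5, 4, 5],
    [2, 3, 2, 3],
    [5, 5, 4, 4],
    [3, 3, 3, 2],
    [1, 2, 2, 1],
]
alpha = cronbach_alpha(toy_responses)
```

Values closer to 1 indicate that the items vary together, which is why a coefficient such as 0.81 is usually read as acceptable reliability for a multi-item scale.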

Among the main limitations of the study are the use of convenience sampling (digitally active users) and the post-pandemic timing of the qualitative data collection, which may have influenced certain perceptions. However, the expert validation of the instruments and methodological triangulation mitigate these biases and strengthen the credibility of the findings. Beyond the quantitative results, this mixed-methods approach enabled an understanding of how technology and people interact in real-world contexts, analyzing how professional and public perceptions shape both the effectiveness and legitimacy of automated strategies. Figure 4 presents a diagram of the sequential methodological design (DEXPLOR-S) employed in this study.

Figure 4. Methodological design of the study: sequential exploratory mixed-methods approach (DEXPLOR-S)

Source: Own elaboration. Analysis: schematic representation of the sequential methodological process of the study (qualitative phase, quantitative phase, and triangulation).

4.2. Study Sample

The study sample comprises 50 Spanish companies, evenly divided between 25 startups and 25 SMEs, selected based on their documented application of artificial intelligence in communication and digital marketing processes. The inclusion of both profiles responds to the interest in analyzing the impact of AI across different levels of organizational maturity, under the premise that although startups and SMEs present differentiated structures, they share common challenges in adopting disruptive technologies applied to persuasive communication.

From a technical standpoint, an SME is defined, according to the European Commission (2020), as any company with fewer than 250 employees and an annual turnover of less than €50 million. Meanwhile, a startup or emerging company is understood as a recently created organization with a technological base, high scalability potential, and operations in contexts of uncertainty and intensive innovation (Ries, 2011). This differentiation enables the comparison of AI application in structurally distinct contexts, ranging from established business models to emerging environments.

In this study, both profiles were integrated for three main reasons:

1. Because they represent strategic sectors within the Spanish business ecosystem and are involved in key digital transformation processes (Red.es, 2023).

2. Because both types of companies share needs related to communicative efficiency, customer acquisition, and loyalty—areas in which AI can offer competitive advantages.

3. Because comparing startups and SMEs allows the observation of differences and similarities in AI adoption from communicative, technological, and ethical perspectives.

The specific characteristics of this sample are presented in the following subsections.

4.2.1. Sample of Selected SMEs

The first part of the qualitative sample comprises 25 Spanish SMEs that implement artificial intelligence solutions specifically applied to communication, digital marketing, or automated customer service. All of them meet the inclusion criteria established in the study's methodological design and were validated through verifiable public sources.

The selection was carried out by prioritizing sectoral diversity (health, legaltech, energy, mobility, fintech, sustainability, among others), as well as ensuring a balance between different business models (B2B, B2C, and hybrid). Likewise, efforts were made to represent different levels of technological maturity and AI implementation, ranging from companies that employ basic conversational tools or automated CRMs to those that apply machine learning algorithms for predictive segmentation, dynamic content personalization, or lead scoring.

This group of SMEs enables the analysis of how consolidated organizations — although limited in resources compared to large corporations — are incorporating advanced technologies to optimize their persuasive communication, enhance customer experience, and strengthen their competitiveness in digital environments.

The detailed information on the selected SMEs, including name, sector, business model, and type of AI-based technology used, is presented in Appendix 1.

4.2.2. Sample of Selected Startups

The second half of the qualitative sample comprises 25 Spanish startups that utilize artificial intelligence in their communication strategies, digital marketing, content personalization, or automated customer service. They were selected through theoretical–purposive sampling, following the same inclusion criteria detailed in Section 4.1, and verified using updated public sources.

In contrast to SMEs, startups operate in highly volatile environments, with a clear focus on scalability, accelerated growth, and intensive innovation (Ries, 2011). In this sense, their inclusion in the sample allows for the observation of how these companies integrate AI from early stages as a key strategic component to attract customers, automate communication processes, and generate competitive advantages in the market. The sample meets the previously defined inclusion criteria, particularly regarding the existence of current, public, and verifiable evidence of AI usage for communicative purposes. Sectoral diversity was prioritized —including digital health, fintech, tourism, retail, legaltech, and education, among others— as well as the inclusion of different technological approaches: from natural language generative models and conversational assistants to computer vision systems, predictive segmentation algorithms, or personalized recommendation engines. This portion of the sample enables the study to examine how AI is conceived not only as an optimization tool, but also as a core axis of communicative innovation from the very inception of each project. Detailed information on these startups —including name, sector, business model, and type of AI technology employed— is presented in Appendix 2.

4.2.3. Profile of Interviewed Participants

As part of the qualitative phase of the study, semi-structured interviews were conducted with professionals who held direct responsibility for marketing, communication, or digital strategy within the selected companies. The profiles were identified through public sources —such as LinkedIn, corporate websites, and specialized media— and selected based on their involvement in the design, implementation, and evaluation of artificial intelligence-based strategies.

The interviews, conducted online, lasted between 25 and 40 minutes and were structured into five thematic blocks: AI adoption, tools used, perceived impact on performance metrics, ethical risks, and the role of AI in persuasive communication, while maintaining flexibility to explore emerging issues. Of the participants, 54% were women and 46% men, with a mean age of 41.8 years (ranging from 29 to 59). Regarding education, more than 70% held postgraduate degrees in communication, marketing, or STEM fields. The sectoral distribution of interviewees proportionally reflects the business profiles represented in the overall sample.

To ensure the privacy of informants and comply with ethical principles for qualitative research, all data are presented in anonymized form. The thematic analysis was conducted using open and axial coding techniques, following established protocols for qualitative analysis (Yin, 2017; Bryman, 2016). Atlas.ti (v.23) was used to organize codes, detect emerging patterns, and establish relationships between thematic categories. Detailed information on interviewee profiles is provided in Appendix 3 as part of the methodological transparency of this phase of the study.

4.3. Structured Consumer Surveys: Perception, Trust, and Ethics

As a complement to the qualitative phase, a quantitative study was carried out through structured surveys administered to a sample of 500 consumers who had interacted with digital marketing campaigns driven by artificial intelligence. The aim of this phase was to empirically contrast the findings obtained from the interviews with company representatives and, in particular, to explore how audiences perceive persuasive strategies mediated by AI.

Data collection took place between November 2024 and January 2025. The questionnaire was designed based on previous studies on algorithmic perception, communicative ethics, and digital trust (Torres-Toukoumidis et al., 2024; Floridi et al., 2018). It included closed-ended questions, Likert-scale items, and multiple-choice options organized around the following key dimensions:

a) Awareness of AI use in commercial communication.

b) Degree of acceptance of algorithmic personalization.

c) Perceptions of transparency and manipulation.

d) Trust in message automation.

e) Valuation of human supervision in AI systems.

f) Concern over the use of personal data.

The questionnaire underwent expert validation to ensure the internal consistency of the measurement scales. The sample was obtained through non-probabilistic convenience sampling, selecting digital users who had recently been exposed to automated campaigns from emerging or medium-sized companies. Data analysis was conducted using the statistical software Jamovi (version 2.4), applying descriptive analysis, bivariate correlations, binary logistic regression, and exploratory factor analysis (EFA) to identify latent patterns in consumer perception and trust (Hair et al., 2019). Demographic and contextual variables were included to segment results by gender, age, employment sector, and level of digital literacy.
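As an illustration of the bivariate correlations mentioned above, the sketch below computes Pearson's r between two Likert-type variables. It assumes Pearson's coefficient is the one intended, and the variable names and scores are invented toy values rather than data from the survey.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation coefficient between two equally long numeric lists."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    covariation = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined for a constant variable")
    return covariation / (spread_x * spread_y)


# Toy scores (assumption): five respondents rated on a 1 to 5 scale.
perceived_transparency = [1, 2, 3, 4, 5]
trust_in_automation = [2, 2, 3, 5, 5]
r = pearson_r(perceived_transparency, trust_in_automation)
```

A coefficient near +1 would indicate that respondents who perceive more transparency also report more trust; a value near 0 would indicate no linear association.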

Integrating this quantitative phase within the sequential exploratory design enabled the validation of the proposed hypotheses and the enrichment of the analysis with data directly obtained from citizens. This approach provided a complementary perspective on how automated persuasion is interpreted from the receiver’s point of view, and offered relevant insights into the factors that reinforce—or erode—consumer trust in intelligent systems.

The rigor of the study was ensured through thematic coding protocols, statistical quality controls, and triangulation of sources, thereby consolidating the validity and reliability of the results presented (Hernández-Sampieri et al., 2014).

5. Analysis of Results

This section presents the findings derived from the analysis. The results are organized into eight thematic blocks that correspond to the objectives and hypotheses of the study, addressing aspects ranging from the strategic purposes of AI adoption to its operational, ethical, and perceptual impact. This structure facilitates a comparative analysis between corporate perspectives and public perception.

5.1. Adoption and Strategic Objectives of AI Use

The results obtained from the qualitative interviews indicate that startups and SMEs are primarily adopting artificial intelligence with a strategic logic focused on optimizing their communication processes. This implementation enables the automation of repetitive tasks, the personalization of content, and improved efficiency in customer acquisition and retention.

In particular, the most commonly used tools include conversational chatbots, recommendation systems, marketing automation platforms, and predictive segmentation algorithms. These resources have been incorporated both as a response to operational constraints (in the case of many SMEs) and as a means to enhance scalable business models in highly competitive environments (in the case of startups).

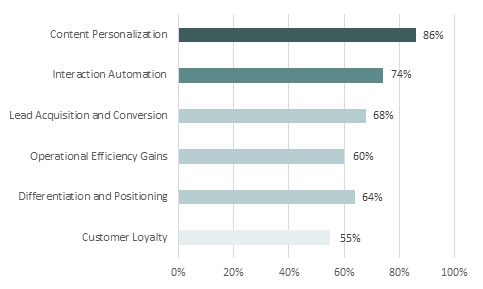

As shown in Figure 5, the strategic objectives most frequently cited by the marketing managers interviewed revolve around three main axes: user experience personalization (86%), interaction automation (74%), and the improvement of metrics such as lead acquisition and conversion (68%).

Figure 5. Percentage of startups and SMEs mentioning different strategic objectives in the adoption of artificial intelligence

Source: Own elaboration based on fieldwork. Analysis: thematic coding and frequency count of mentions in qualitative interviews.

This hierarchy reveals a clear prioritization of operational functions over more relational objectives, such as customer loyalty (55%) or strategic differentiation (64%). This suggests that, although AI is recognized as a powerful tool for optimizing processes and reducing costs, its potential to build long-term relationships or generate brand identity has not yet been fully developed in these organizations. Thus, the logic of adoption appears to be more functional than transformational: efficiency is prioritized over communicative reinvention or narrative innovation.
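The frequency count behind Figure 5 is described only as thematic coding followed by a count of mentions. A minimal sketch of that kind of tally, assuming each interview has already been reduced to a list of coded objectives, might look like this. The helper `mention_shares` and the four sample interviews are hypothetical and do not reproduce the study's data.

```python
from collections import Counter

def mention_shares(coded_interviews):
    """Return the percentage of interviews that mention each coded objective."""
    total = len(coded_interviews)
    counts = Counter()
    for codes in coded_interviews:
        # Count an objective once per interview, however often it is mentioned.
        counts.update(set(codes))
    return {code: round(100 * n / total, 1) for code, n in counts.items()}

# Hypothetical codes assigned to four interviews (not the study's data).
interviews = [
    ["personalization", "automation", "personalization"],
    ["personalization", "conversion"],
    ["automation", "personalization"],
    ["loyalty"],
]
shares = mention_shares(interviews)
for code, share in sorted(shares.items(), key=lambda kv: -kv[1]):
    print(f"{code}: {share}%")
```

Counting each objective once per interview keeps the percentages interpretable as a share of companies rather than a share of total mentions.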

5.2. Impact on Key Metrics: Engagement, Conversion, and Personalization

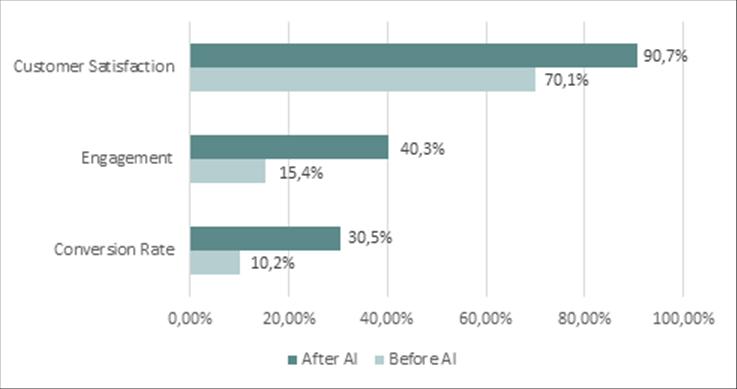

The integration of AI into persuasive communication strategies has had a positive impact on key performance indicators, including conversion rates, engagement levels, and customer satisfaction. These effects were supported by both the marketing professionals interviewed and the consumers surveyed. From the business perspective, process automation and algorithmic personalization are associated with significant improvements in the performance of digital campaigns. Tools such as recommendation engines, intelligent segmentation systems, and conversational chatbots have enabled the customization of messages to meet the specific needs of each user, thereby optimizing interaction and commercial return.

As shown in Figure 6, most participating companies reported noticeable increases in their conversion rates and engagement levels following the implementation of AI. Likewise, the surveys indicate a clear improvement in customer perceptions of the digital experiences offered by these brands. However, a closer look at the data shows that the largest relative increase is in conversion rates (from 10.2% to 30.5%, roughly a threefold rise), suggesting an intensive use of AI for commercial efficiency rather than for emotional relationship-building. Engagement, although improved (from 15.4% to 40.3%), remains below desirable levels, which may indicate that algorithmic interaction still struggles to establish and sustain connections with users. Customer satisfaction, although high (from 70.1% to 90.7%), may be driven by improvements in usability and personalization, but does not necessarily imply long-term trust or loyalty.

Figure 6. Variation in performance and customer experience metrics before and after the use of AI (n = 500)

Source: Own elaboration based on consumer survey data. Comparative analysis before and after the implementation of artificial intelligence.

These findings suggest a more nuanced perspective on the initial enthusiasm surrounding the technical benefits of AI: although there are objective improvements, they do not, by themselves, guarantee a strong consumer relationship.
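The before/after percentages reported for Figure 6 can be read either as a change in percentage points or as a change relative to the starting value, and the two readings rank the metrics differently. The short sketch below makes the arithmetic explicit; the function name `change` is illustrative, and the input values are the ones stated in the text.

```python
def change(before, after):
    """Return (percentage-point change, relative change in %) between two rates."""
    points = round(after - before, 1)
    relative = round(100 * (after - before) / before, 1)
    return points, relative

# Before/after percentages as reported for Figure 6.
metrics = {
    "conversion": (10.2, 30.5),
    "engagement": (15.4, 40.3),
    "satisfaction": (70.1, 90.7),
}
for name, (before, after) in metrics.items():
    points, relative = change(before, after)
    print(f"{name}: +{points} points, +{relative}% relative")
```

With these figures, engagement shows the largest gain in percentage points, while conversion shows the largest gain relative to its starting value, so the two readings are best kept apart when comparing metrics.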

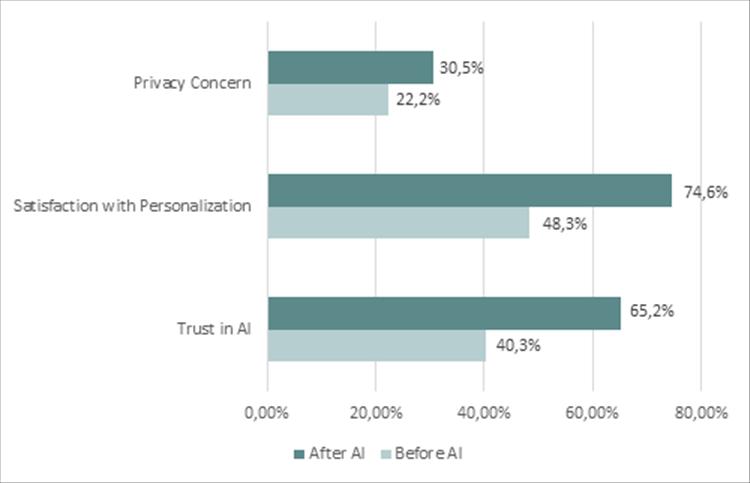

5.3. Transparency, Trust, and Public Perception

In addition to the operational impact, the study examined how consumers perceive artificial intelligence in their relationship with brands, particularly in terms of trust, personalization, and the protection of personal data. While algorithmic personalization is viewed positively in many cases, it also generates concerns when it is not accompanied by transparency, oversight, or informed consent. Figure 7 summarizes the changes in public perception resulting from interactions with AI-driven communication strategies.

Figure 7. Comparison of consumer perception before and after interacting with AI-based communication strategies (n = 500)

Source: Own elaboration based on data collected through consumer surveys. Analysis: pre-/post-interaction comparison with AI (survey results).

The results show a significant increase in satisfaction with personalization (from 48.3% to 74.6%) and in overall trust in AI-based systems (from 40.3% to 65.2%). However, concern about privacy also increased, rising from 22.2% to 30.5%.

This simultaneous increase in both trust and concern reveals a key paradox: although algorithmic interactions are perceived as useful and pleasant by many consumers, they do not fully dispel apprehension regarding the use of personal data. The fact that privacy concerns rise in a context where satisfaction also improves suggests that users value the functionality but remain wary of the processes that enable it. In other words, they enjoy the outcome, but still do not fully understand—or accept—the technological means involved.

This ambivalence highlights an information and trust gap that companies must address if they aim to build sustainable relationships with their audiences. It is not enough to offer personalized experiences; they must also be understandable, auditable, and ethically justified.
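The text describes the changes in Figure 7 as significant without naming the test applied. As a rough sketch only, assuming two independent samples of 500 respondents each, a two-proportion z-test could be computed as follows. This is an assumption rather than the authors' procedure: a true pre/post design on the same respondents would instead call for a paired test such as McNemar's, which needs respondent-level data not given in the article. The function name `two_proportion_z` is hypothetical.

```python
import math

def two_proportion_z(p1, n1, p2, n2):
    """Two-sided z-test for the difference between two independent proportions."""
    pooled = (p1 * n1 + p2 * n2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    if se == 0:
        return 0.0, 1.0
    z = (p2 - p1) / se
    # Standard normal CDF expressed through the error function.
    p_value = 2 * (1 - 0.5 * (1 + math.erf(abs(z) / math.sqrt(2))))
    return z, p_value

# Privacy concern before and after, as reported; n = 500 per group is assumed.
z, p_value = two_proportion_z(0.222, 500, 0.305, 500)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

The same function can be applied to the satisfaction and trust figures, subject to the same independence assumption.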

5.4. Perceived Risks and Ethical Tensions in Automated Communication

As AI-based marketing strategies become more widespread, relevant ethical tensions also emerge. Both professionals and consumers expressed concerns about the potential adverse effects of persuasive automation, particularly when it is deployed without supervision or clear ethical frameworks.

One of the most frequently mentioned risks was the possibility of emotional manipulation. Several interviewees warned about the potential for AI to influence consumer decisions excessively, especially when messages are personalized using sensitive data or targeted at vulnerable audiences.

From the consumer side, 30.5% expressed concern about the opacity in data use, and 26% reported discomfort when uncertain about whether they were interacting with a machine or a human. These responses highlight a lack of algorithmic transparency and underscore the need to ensure informed consent and auditability.

Another area of concern is the lack of human oversight: only 18% of companies reported having internal review mechanisms for their AI systems. This absence increases the risks of bias, decontextualization, and limited institutional accountability.

Ultimately, the legitimacy of AI in communication depends not only on its technical effectiveness but also on the structure of the relationship with the user. Trust requires not only algorithmic accuracy, but also principles of fairness, clarity, and respect for digital rights.

5.5. Divergences Between Corporate Discourse and Consumer Perception

A key contribution of this study is the triangulation of perspectives between professionals and consumers, which enables the identification of both convergences and divergences regarding the use of artificial intelligence in persuasive communication.

From the corporate side, more than 80% of the marketing managers interviewed expressed enthusiasm about the capacity of AI to improve efficiency, reduce costs, and optimize campaigns. Algorithmic personalization was valued as a tool to foster customer loyalty, increase conversion, and strengthen brand positioning.

Consumers, however, adopt a more nuanced perspective. Although 74.6% reported satisfaction with personalized experiences, 30.5% expressed concern about the use of personal data, and 26% distrusted automated interactions that were not explicitly identified as such.

Furthermore, a difference emerges in the very notion of trust: for companies, trust is built through message relevance; for consumers, it is built through transparency, consent, and control over the interaction. These differences show that technology does not automatically align the values of senders and receivers. For AI in marketing to be legitimate, it must be developed through a bidirectional approach that balances commercial objectives with citizens’ rights and expectations.

Table 1. Divergences between corporate discourse and consumer perception

| Aspect | Companies | Consumers |
| --- | --- | --- |
| Main objective | Optimize campaigns and reduce costs | Improve experience and attention |
| Perception of AI | Strategic opportunity | Useful technology, but ambivalent |
| Trust | Efficiency and performance | Depends on clarity and control |
| Transparency | Low priority in corporate discourse | High priority; request explanations |
| Perceived risks | Little explicit concern | Concern about privacy and |
| Human oversight | Not always considered | Expect a visible and perceptible human presence |

Source: Own elaboration. Analysis: qualitative comparison of perceptions between corporate discourse and the public.

5.6. Key Factors for User Trust and Ethical Conditions for Adoption

Algorithmic transparency emerges as one of the most highly valued elements. User trust increases when brands clearly communicate the use of AI, the data employed, and the objectives of automation. Conversely, the absence of such communication generates distrust, particularly among users with lower levels of technological familiarity.

Another fundamental component is active human oversight. Knowing that there are people monitoring and validating algorithmic decisions significantly increases the legitimacy of the process and enhances user reassurance. Likewise, participants expressed the need for genuine informed consent, going beyond the typical legal checkbox. Users expect platforms to offer clear options to deactivate recommendations or limit data usage, thus fostering a sense of control.

On an ethical level, it is considered crucial to avoid manipulative practices. Persuasive design should respect individual autonomy, refraining from exploiting emotional vulnerabilities—especially in sensitive sectors such as health, finance, or education.

Finally, both companies and consumers emphasize the need for greater ethical and media literacy training to help understand the scope, risks, and limitations of AI. Only one in five professionals interviewed reported having received any ethical training related to the use of AI, which highlights a concerning gap in professional preparation. These factors form the foundation for the responsible implementation model proposed in the following section.

5.7. Proposal for a Responsible Implementation Model

Based on the results obtained, a reference model for the ethical implementation of AI in marketing and persuasive communication is proposed, designed explicitly for startups and SMEs. Its progressive adoption can not only improve metrics such as conversion and engagement, but also strengthen trust-based relationships with audiences, fostering more responsible brands aligned with educommunicative principles.

Table 2 summarizes the five strategic pillars that form this responsible implementation model, integrating both qualitative and quantitative findings from the study. Each pillar represents a key dimension—transparency, human oversight, informed consent, ethical persuasion, and critical training—aimed at striking a balance between communicative effectiveness and social responsibility in the use of AI.

Table 2. Ethical AI model for digital communication environments

| No. | Strategic Axis | Description |
| --- | --- | --- |
| 1 | Proactive transparency | Explicitly inform users about the use of AI |
| 2 | Integrated human oversight | Include responsible personnel to monitor, |
| 3 | Genuine informed consent | Design systems that enable users to determine how their data is utilized. |
| 4 | Applied ethical persuasion | Avoid emotional manipulation or deceptive |
| 5 | Critical training and culture | Train teams and users in ethical digital literacy and responsible AI practices. |

Source: Own elaboration. Analysis: synthesis of qualitative and quantitative findings of the study (model derived from results).

5.8. Final Synthesis of Results: Connection Between Objectives, Hypotheses, and Findings

This subsection presents a structured integration of the study's main objectives, the findings derived from the empirical work, and their connection to the formulated hypotheses, enabling a critical reading of the article's scientific contribution. Likewise, the interpretation of the results is reinforced through visual analysis and the data shown in the previous figures.

Table 3 outlines the relationship between the study’s objectives, the hypotheses tested, and the most relevant empirical findings, providing a synthetic view of the internal coherence between the qualitative and quantitative phases of the methodological design.

Table 3. Relationship Between Study Objectives, Empirical Findings, and Tested Hypotheses

| Study Objective | Associated Hypothesis | Main Findings |
| --- | --- | --- |
| SO1. Analyze how startups and SMEs integrate AI into their persuasive communication and digital marketing strategies. | H1. The implementation of AI systems in digital marketing significantly improves conversion rates in startups and SMEs. | 86% of companies use AI to personalize content, 74% to automate interactions, and 68% to optimize conversion metrics. This confirms a strategic use oriented toward efficiency rather than communicative innovation. |
| SO2. Evaluate the impact of AI on key metrics such as conversion, engagement, and customer satisfaction. | H2. The use of AI for message personalization increases engagement and customer satisfaction. | Conversion rates increased from 10.2% to 30.5%, engagement from 15.4% to 40.3%, and customer satisfaction from 70.1% to 90.7%, confirming significant improvements after integrating AI into campaigns. |
| SO3. Explore consumer perceptions of AI-generated personalized messages. | H3. Positive perceptions of AI are associated with high levels of transparency and ethical communication from brands. | 74.6% of consumers valued personalization positively, although 30.5% expressed concerns about privacy. Trust in AI increased from 40.3% to 65.2%, indicating acceptance conditioned by transparency. |
| SO4. Identify the main ethical risks associated with the use of AI in communication, including privacy, bias, and user autonomy. | H4. The absence of human supervision in AI-based systems generates distrust and reduces communicative effectiveness. | Only 18% of companies have internal review mechanisms for their AI systems. 30.5% of users expressed concern about data opacity, and 26% about not knowing whether they were interacting with humans or machines. |
| SO5. Propose a responsible AI implementation model that combines communicative effectiveness, human oversight, and ethical principles. | H5. The adoption of a hybrid model combining AI with human control strengthens consumer trust and improves brand–audience relationships. | A responsible implementation model based on transparency, supervision, and critical literacy is validated, balancing persuasive effectiveness and communication ethics. |

Source: Author’s elaboration based on qualitative and quantitative results obtained from interviews (n = 50) and surveys (n = 500). Analysis: Interpretive integration through data triangulation.

The synthesis presented in Table 3 establishes a direct connection between the study objectives, findings, and hypotheses, demonstrating the methodological coherence of the sequential design and the empirical robustness of the results. Based on this integration, the following section presents the general conclusions of the study, further developing the discussion regarding the fulfillment of the main research objective and the validation of the specific objectives proposed.

6. Conclusions

This research has enabled a comprehensive analysis of the impact of artificial intelligence on persuasive communication in Spanish startups and SMEs. The study addressed essential dimensions, including content personalization, campaign efficiency, user trust, the risks associated with persuasive automation, and the need to incorporate ethical frameworks into technological design. This final section synthesizes the main findings, responding to the general objective, the specific objectives, and the hypotheses formulated.

6.1. Fulfillment of the General Objective

The primary objective of this study was to examine the impact of artificial intelligence on digital persuasive communication, considering its ability to personalize messages, enhance marketing effectiveness, and promote trust, as well as its associated ethical risks, particularly in relation to privacy, manipulation, and fairness.

The findings indicate that AI has evolved from a merely operational tool to a strategic resource in the relationship between brands and audiences. This aligns with Longoni et al. (2019) and Floridi et al. (2018), who argue that trust in automated communication depends more on ethical perception than on technical performance.

Consistent with Afroogh et al. (2024) and Sebastião & Dias (2025), this study confirms that the acceptance of persuasive AI is grounded in three key pillars: transparency, human oversight, and informed consent. Although startups and SMEs achieve improvements in efficiency and personalization, user trust does not derive solely from algorithmic sophistication, but also from the level of explainability and accountability perceived in its implementation. Thus, technical effectiveness does not automatically guarantee communicative legitimacy. Comparative international analyses, such as those by Ngo (2025) in Asia and Laux et al. (2024) in Europe, reveal a convergent global trend that emphasizes algorithmic transparency as a central element of digital trust.

In sum, AI enhances the effectiveness of persuasive communication, but its legitimacy depends on the integration of ethical and normative principles that ensure explainability and respect for digital rights. This finding reinforces the need to align business practices with regulatory frameworks such as the European AI Act (Regulation 2024/1689), which promotes transparency and human oversight in AI systems applied to communication.

6.2. Fulfillment of the Specific Objectives

The analysis of the five specific objectives provides a critical perspective on how Spanish startups and SMEs are incorporating artificial intelligence into persuasive communication, integrating ethical, technological, and communicative dimensions.

First, findings related to technological integration (SO1) confirm that AI adoption is uneven between startups and SMEs (Sebastião & Dias, 2025). Startups tend to frame AI as a structural advantage from early stages, whereas SMEs adopt it primarily for operational efficiency. This pattern aligns with international evidence linking organizational maturity to ethical understanding of automation (Ngo, 2025; Laux et al., 2024).

Second (SO2), regarding operational impact, AI shows a positive correlation with conversion, engagement, and customer satisfaction indicators (Afroogh et al., 2024). However, as Longoni et al. (2019) caution, technical effectiveness loses value when users perceive a lack of transparency or human oversight. In this study, quantifiable improvements translated into communicative legitimacy only when consumers perceived control and a clear explanation.

Regarding consumer perception (SO3), the results indicate that personalization is broadly accepted when the origin and purpose of the messages are clear. Consumers value AI to the extent that they understand how it works and retain the ability to shape their experience (Ngo, 2025), confirming that algorithmic transparency is a necessary condition for the acceptance of AI in marketing.

The fourth objective, addressing ethical risks (SO4), highlights persistent concerns related to emotional manipulation, opaque data practices, and the lack of human review. In line with Floridi et al. (2018), AI ethics must shift from purely technical responsibility toward communicative responsibility: the absence of ethical training and communicational auditing generates distrust even in technologically advanced organizations.

Finally, SO5 represents the main contribution of the study: the proposal of a responsible AI implementation model structured around five key dimensions—transparency, human oversight, informed consent, persuasive ethics, and critical education—which reinforces the principles of the European AI Act and the Spanish Digital Rights Charter (Government of Spain, 2021). This model aligns with the broader shift toward trustworthy and socially responsible AI, offering an operational framework for communication and marketing professionals.

From an applied perspective, the study recommends specific actions: designing auditable campaigns that explicitly communicate the use of AI, incorporating human review mechanisms throughout all stages of persuasive processes, promoting algorithmic literacy within marketing teams, and developing ethical codes for automated communication. These measures strengthen social legitimacy and reputational resilience in increasingly automated digital environments.

However, several limitations must be acknowledged. The sample has a high digital-maturity profile, is not statistically representative, covers a limited range of sectors, and is confined to the Spanish context. This restricts the generalizability of the findings and highlights the need to compare results across international contexts with varying regulatory and cultural frameworks. Future research should explore longitudinal and transnational comparisons (e.g., Europe–Latin America, Europe–Asia) to assess the evolution of trust and perceived ethics in persuasive AI. Additionally, experimental and neurometric methodologies are recommended to analyze consumers’ emotional responses under varying conditions of transparency and algorithmic control.

Overall, this analytical block consolidates a conceptual synthesis: the effectiveness of AI in persuasive communication depends not only on its technical capacity, but on its ethical, communicative, and regulatory integration—establishing a hybrid model of AI + human oversight as the benchmark for digital trust.

6.3. Final Conclusion

This study demonstrates that artificial intelligence is no longer a peripheral tool, but has become a key actor in digital persuasive communication, particularly within the ecosystem of Spanish startups and SMEs. Its implementation has a positive impact on variables such as conversion, personalization, and customer satisfaction; however, its legitimacy relies more on ethical and communicative integration than on technical capacity alone.

The contrast between corporate discourse and consumer perception reveals a growing consensus: AI is valued only when deployed transparently, with human oversight, and in ways that respect user autonomy. This finding is consistent with Longoni et al. (2019) and Afroogh et al. (2024), who argue that trust in intelligent systems is grounded in explainability and meaningful human supervision.

The main contribution of this study lies in the proposal of a responsible model for AI implementation in marketing, developed from empirical evidence and structured into five core dimensions: transparency, human oversight, informed consent, persuasive ethics, and critical education. This model helps bridge the gap between technical efficiency and social legitimacy, aligning with the principles of the European AI Act (Regulation 2024/1689) and the Spanish Digital Rights Charter (Government of Spain, 2021), which reinforce the values of transparency, accountability, and protection of digital autonomy.

In sum, artificial intelligence is consolidating its role as a driving force of contemporary persuasive communication. However, its legitimacy will depend on organizations’ ability to integrate ethics, transparency, and human control into every stage of interaction. This study lays the groundwork for a more transparent, human-centered, and trust-oriented approach to digital marketing—one that aligns with current regulatory demands and the social expectations of the emerging algorithmic era.

Ethics and Transparency

Acknowledgments

We express our gratitude to Universidad Camilo José Cela for the institutional support provided during the development of this study. We also thank the company Proofers for translating the manuscript. Finally, we extend our appreciation to the professionals and organizations who participated in the interviews, whose collaboration and expertise were essential for the development of the results.

Conflicts of Interest

The authors declare that they have no conflicts of interest regarding the content of this article.

Funding

This research did not receive specific funding from any public or private organization.

Author Contributions

| Contribution | Author 1 | Author 2 | Author 3 | Author 4 |
| --- | --- | --- | --- | --- |
| Conceptualization | X | X | | |
| Data curation | X | | | |
| Formal Analysis | X | X | | |
| Funding acquisition | | X | | |
| Investigation | X | | | |
| Methodology | X | | | |
| Project administration | | X | | |
| Resources | | X | | |
| Software | X | | | |
| Supervision | | X | | |
| Validation | | X | | |
| Visualization | X | X | | |
| Writing - original draft | X | | | |
| Writing - review & editing | | X | | |

Data Availability

The data supporting the findings of this study are available from the authors upon reasonable request.

References

Afroogh, S., Akbari, A., Malone, E. et al. (2024). Trust in AI: progress, challenges, and future directions. Humanities and Social Sciences Communications, 11, 1568. https://doi.org/10.1057/s41599-024-04044-8

Bryman, A. (2016). Social Research Methods (5th ed.). London: Oxford University Press.

Comisión Europea (2020). Guía del usuario sobre la definición del concepto de pyme. Oficina de Publicaciones de la Unión Europea. https://tinyurl.com/42u68d2h

Creswell, J. W. & Plano Clark, V. L. (2017). Designing and Conducting Mixed Methods Research (3rd ed.). SAGE Publications.

Davenport, T., Guha, A., Grewal, D. & Bressgott, T. (2020). How artificial intelligence will change the future of marketing. Journal of the Academy of Marketing Science, 48, 24-42. https://doi.org/10.1007/s11747-019-00696-0

Eubanks, V. (2018). Automating Inequality: How High-Tech Tools Profile, Police, and Punish the Poor. St. Martin’s Press.

European Court of Auditors (2024). Special report 08/2024: EU Artificial intelligence ambition. European Union Publications. https://tinyurl.com/2fuzenny

European Commission (2024). More support for artificial intelligence start-ups to boost innovation. [Press release]. https://tinyurl.com/4hhyzz7t

European Union (2024). Regulation (EU) 2024/1689 of the European Parliament and of the Council of 13 June 2024 laying down harmonised rules on artificial intelligence (Artificial Intelligence Act). Official Journal of the European Union, L (12 July 2024). https://eur-lex.europa.eu/eli/reg/2024/1689/oj

Eurostat (2025). Usage of AI technologies increasing in EU enterprises. European Commission. https://tinyurl.com/2edbym55

Fernández-Fernández, J. L. & Pinillos, A. (2011). De la RSC a la sostenibilidad corporativa: Una evolución necesaria para la creación de valor. Harvard Deusto Business Review, 204, 50-57.

Floridi, L., Cowls, J., Beltrametti, M., Chatila, R., Chazerand, P., Dignum, V. ... & Vayena, E. (2018). AI4People—An ethical framework for a good AI society: Opportunities, risks, principles, and recommendations. Minds and Machines, 28(4), 689-707. https://doi.org/10.1007/s11023-018-9482-5

Gobierno de España (2021). Carta de Derechos Digitales. Secretaría de Estado de Digitalización e Inteligencia Artificial. https://tinyurl.com/zddf5vta

Grewal, D. & Roggeveen, A. L. (2017). The future of retailing. Journal of Retailing, 93(1), 1-6. https://doi.org/10.1016/j.jretai.2016.12.008

Gunning, D. & Aha, D. W. (2019). DARPA’s explainable artificial intelligence (XAI) program. AI Magazine, 40(2), 44-58. https://doi.org/10.1609/aimag.v40i2.2850

Hair, J. F., Risher, J. J., Sarstedt, M. & Ringle, C. M. (2019). When to use and how to report the results of PLS-SEM. European Business Review, 31(1), 2-24. https://doi.org/10.1108/EBR-11-2018-0203

Hernández-Sampieri, R., Fernández-Collado, C. & Baptista-Lucio, P. (2014). Metodología de la investigación (6th ed.). McGraw-Hill.

IndesIA (2024). Barómetro de adopción de la inteligencia artificial en las pymes españolas: Edición 2024. Asociación para la Digitalización de la Industria Española. https://www.indesia.org/barometro-ia-v8-1/

Jauregui Caballero, A. & Ortega Ponce, C. (2020). Transmedia storytelling in the social appropriation of knowledge. Revista Latina de Comunicación Social, 77, 357-372. https://doi.org/10.4185/RLCS-2020-1462

Kaplan, A. & Haenlein, M. (2019). Rulers of the world, unite! The challenges and opportunities of artificial intelligence. Business Horizons, 63(1), 37-50. https://doi.org/10.1016/j.bushor.2019.09.003

Kumar, V., Ramachandran, D. & Kumar, B. (2021). Influence of new-age technologies on marketing: A research agenda. Journal of Business Research, 125, 864-877. https://doi.org/10.1016/j.jbusres.2020.01.007

Lee, M. C. M., Scheepers, H., Lui, A. K. H. & Ngai, E. W. T. (2023). The implementation of artificial intelligence in organizations: A systematic literature review. Information & Management, 60(5), 103816. https://doi.org/10.1016/j.im.2023.103816

Longoni, C., Bonezzi, A. & Morewedge, C. K. (2019). Resistance to medical artificial intelligence. Journal of Consumer Research, 46(4), 629-650. https://doi.org/10.1093/jcr/ucz013

Mittelstadt, B. D., Allo, P., Taddeo, M., Wachter, S. & Floridi, L. (2016). The ethics of algorithms: Mapping the debate. Big Data & Society, 3(2). https://doi.org/10.1177/2053951716679679

Nagamini, E. & Aguaded, I. (2018). La educomunicación en el contexto de las nuevas dinámicas discursivas mediáticas. Revista Mediterránea de Comunicación, 9(2), 119-121. https://doi.org/10.14198/MEDCOM2018.9.2.27

Ngo, V. M. (2025). Balancing AI transparency: Trust, Certainty, and Adoption. Information Development, 0(0). https://doi.org/10.1177/02666669251346124

Raji, I. D., Smart, A., White, R. N., Mitchell, M., Gebru, T., Hutchinson, B. ... & Barnes, P. (2020). Closing the AI accountability gap: Defining an end-to-end framework for internal algorithmic auditing. In Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency (FAT '20) (pp. 33-44). Association for Computing Machinery. https://doi.org/10.1145/3351095.3372873

Red.es (2023). La transformación digital en la pyme española: Informe de resultados del Programa Oficinas Acelera pyme. Entidad Pública Empresarial Red.es. https://tinyurl.com/73uh5ux4

Ries, E. (2011). The Lean Startup: How Today's Entrepreneurs Use Continuous Innovation to Create Radically Successful Businesses. Crown Business.

Russell, S. J. & Norvig, P. (2020). Artificial Intelligence: A Modern Approach (4th ed.). Pearson Education.

Sebastião, S. & Dias, D. (2025). AI Transparency: A Conceptual, Normative, and Practical Frame Analysis. Media and Communication, 13, Article 9419. https://doi.org/10.17645/mac.9419

Sundar, S. S. (2020). Rise of machine agency: A framework for studying the psychology of human–AI interaction. Journal of Computer-Mediated Communication, 25(1), 74-88. https://doi.org/10.1093/jcmc/zmz026

Suraña-Sánchez, C. & Aramendia-Muneta, M. E. (2024). Impact of artificial intelligence on customer engagement and advertising engagement: A review and future research agenda. International Journal of Consumer Studies, 48(3), e13027. https://doi.org/10.1111/ijcs.13027

Tadimarri, A., Gurusamy, A., Sharma, K. K. & Jangoan, S. (2024). AI-powered marketing: Transforming consumer engagement and brand growth. International Journal for Multidisciplinary Research, 6(2). https://doi.org/10.36948/ijfmr.2024.v06i02.14595

Torres-Toukoumidis, Á., Santín Picoita, F. & Henríquez Mendoza, E. (2024). Inteligencia artificial y educomunicación. https://doi.org/10.52495/c2.emcs.23.ti12

Yin, R. K. (2017). Case Study Research and Applications: Design and Methods. SAGE Publications.

Zarouali, B., Boerman, S. C., Voorveld, H. A. M. & van Noort, G. (2022). The algorithmic persuasion framework in online communication: Conceptualization and a future research agenda. Internet Research, 32(4), 1076-1096. https://doi.org/10.1108/INTR-01-2021-0049

Zozaya Durazo, L. D., Feijoo Fernández, B. & Sádaba Chalezquer, C. (2022). Análisis de la capacidad de menores en España para reconocer los contenidos comerciales publicados por influencers. Revista de Comunicación, 21(2), 307-319. https://doi.org/10.26441/RC21.2-2022-A15